Auditory Masking

Thursday, December 22nd, 2011

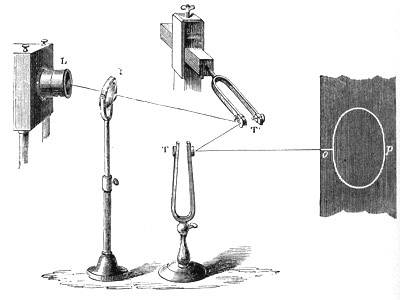

In the previous post I mentioned the importance of level matching when comparing audio equipment with differing amounts of distortion. Implicit was the assumption that the loudness of the two signals would be matched as long as their RMS levels were made equal. Unfortunately, a psychoacoustic phenomenon called auditory masking comes into play to ruin this simple picture. Many people know that in order to double the perceived loudness of a single tone, you must increase the power by approximately ten times (+10dB). However, to double the perceived loudness of a tone by adding a second tone at a much higher frequency, you need only double the power (+3dB)! The reason for this is the compression from auditory masking in the cochlea.
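The arithmetic behind those two numbers can be sketched with a toy model. This assumes a Stevens-type power law in which the loudness of a tone goes roughly as its power raised to 0.3, and that tones far enough apart in frequency do not mask each other, so their loudnesses simply add. The exponent and the function names are illustrative assumptions, not a calibrated loudness model.

```python
import math

EXPONENT = 0.3  # assumed exponent: loudness ~ power ** 0.3 (toy value)

def band_loudness(power):
    """Relative loudness of one tone on its own (toy power-law model)."""
    return power ** EXPONENT

def total_loudness(powers):
    """Sum loudness over tones assumed too far apart to mask each other."""
    return sum(band_loudness(p) for p in powers)

def db(power_ratio):
    """Power ratio expressed in decibels."""
    return 10.0 * math.log10(power_ratio)

one_tone = total_loudness([1.0])
louder_tone = total_loudness([10.0])     # same tone at ten times the power
two_tones = total_loudness([1.0, 1.0])   # add a distant tone of equal power

print(f"+{db(10.0):.1f} dB on one tone: loudness x{louder_tone / one_tone:.2f}")
print(f"+{db(2.0):.1f} dB via a second tone: loudness x{two_tones / one_tone:.2f}")
```

Under these assumptions both routes roughly double the loudness, but the second one costs only a doubling of total power.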

What does this mean for level matching in the presence of distortion? It means that to double the perceived loudness of a single tone it will take +10dB from a “distortionless” amplifier and less than +10dB from an amplifier with distortion! This is one of the reasons that tube amplifiers are perceived as having higher power than comparable solid-state designs: The masking effect of our hearing applies less compression to the distorted sound, as the energy is spread over a broader frequency range. Does this mean tube amps are inherently flawed, inaccurate, etc.? No. As they say, “The proof of the pudding is in the eating.” No amount of hand-waving from audio purists will ever sway some folks from their tube amps, and there’s nothing wrong with that.
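To put a rough number on "less than +10dB", here is a minimal sketch using the same toy power-law loudness (exponent 0.3, an assumption). It further assumes a weakly nonlinear amplifier whose third-harmonic power grows as the cube of the fundamental power, scaled by a hypothetical coefficient `k`, and that the harmonic is not masked by the fundamental. Real hearing partially masks the harmonic, so treat the result as an optimistic bound rather than a prediction.

```python
import math

EXPONENT = 0.3  # assumed loudness exponent (toy value)

def loudness(p_fund, k):
    """Toy loudness of fundamental plus an unmasked third harmonic."""
    p_harm = k * p_fund ** 3          # assumed cubic growth of harmonic power
    return p_fund ** EXPONENT + p_harm ** EXPONENT

def total_power(p_fund, k):
    """Total (RMS-squared) power of fundamental plus third harmonic."""
    return p_fund + k * p_fund ** 3

def db_to_double_loudness(k, p_start=1.0):
    """Increase in total power, in dB, needed to double the toy loudness."""
    target = 2.0 * loudness(p_start, k)
    lo, hi = p_start, 100.0 * p_start  # loudness rises monotonically with power
    for _ in range(100):               # plain bisection on fundamental power
        mid = 0.5 * (lo + hi)
        if loudness(mid, k) < target:
            lo = mid
        else:
            hi = mid
    return 10.0 * math.log10(total_power(hi, k) / total_power(p_start, k))

clean = db_to_double_loudness(0.0)        # "distortionless" amplifier
distorted = db_to_double_loudness(0.001)  # harmonic 30 dB down at the start

print(f"clean amplifier:     +{clean:.2f} dB")
print(f"distorting amplifier: +{distorted:.2f} dB")
```

The distorting amplifier needs fewer dB here because its harmonic grows faster than the fundamental, and each new slice of energy lands in a region of hearing that is not yet compressed.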

There are mathematical models available for this compression process, but when coupled with the typical transfer functions of real audio equipment (not just the simple single-transistor example I gave in the previous post), there are no nice closed-form solutions that can be used for accurate auditory level matching. This could be done with simulation, however. It might be worthwhile to try it to see which distortion mechanisms give the highest perceived loudness for a given RMS level (it seems like higher-order distortion would, but this is known to give poor sound quality – I suppose it is loud though). Overall, the best solution for now is the classic one: Live with a piece of gear for a while to get a good feel for it.
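As a starting point for the simulation idea above, a minimal sketch might pass a sine through a few transfer functions, measure the harmonic powers, normalize them to equal RMS, and sum a toy loudness per harmonic. Everything here is an assumption made for illustration: the 0.3 exponent, the example transfer functions and their coefficients, and above all the treatment of each harmonic as its own unmasked band, which overstates loudness when harmonics are close together or sit near a strong fundamental.

```python
import math

EXPONENT = 0.3   # assumed loudness exponent (toy value)
N = 1024         # samples per period of the test sine
HARMONICS = 10   # harmonics kept; each treated as a separate unmasked band

def harmonic_powers(transfer, amplitude=1.0):
    """Power of harmonics 1..HARMONICS of transfer(amplitude * sine)."""
    out = [transfer(amplitude * math.sin(2 * math.pi * n / N)) for n in range(N)]
    powers = []
    for h in range(1, HARMONICS + 1):  # starts at 1, so any DC offset is ignored
        re = sum(y * math.cos(2 * math.pi * h * n / N) for n, y in enumerate(out))
        im = sum(y * math.sin(2 * math.pi * h * n / N) for n, y in enumerate(out))
        amp = 2.0 * math.hypot(re, im) / N   # amplitude of harmonic h
        powers.append(amp * amp / 2.0)
    return powers

def loudness_at_equal_rms(transfer, amplitude=1.0):
    """Toy loudness after scaling the output to unit total AC power."""
    powers = harmonic_powers(transfer, amplitude)
    total = sum(powers)
    return sum((p / total) ** EXPONENT for p in powers)

# Hypothetical transfer functions, chosen only to contrast distortion orders.
TRANSFERS = {
    "linear": lambda x: x,
    "second-order": lambda x: x + 0.2 * x * x,
    "third-order": lambda x: x - 0.2 * x ** 3,
    "hard clip": lambda x: max(-0.7, min(0.7, x)),
}

results = {name: loudness_at_equal_rms(f) for name, f in TRANSFERS.items()}
for name, value in results.items():
    print(f"{name:>12}: relative loudness {value:.3f}")
```

A more serious version would replace the per-harmonic sum with a proper critical-band model that accounts for masking between neighboring components.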